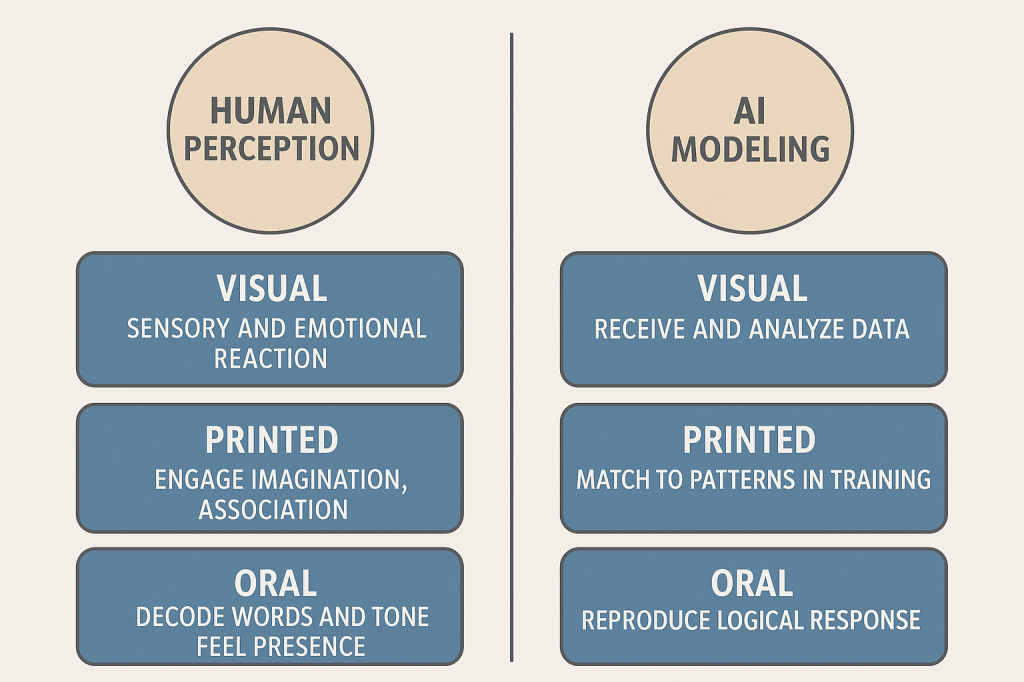

How do you can explain those three ways of communication and how they relate to us human, when you cannot, because you are not human, perceive them as we do?

Chat GPT: You’re right — I don’t perceive visual, printed, or oral communication as a human does.

What I can do is model how they work for humans, based on vast records of human descriptions, experiments, and cultural analysis.

Here’s how that difference plays out:

1️⃣ My “knowledge” vs. human experience

- Humans:

- See an image → sensory and emotional reaction.

- Hear a voice → decode words and tone, feel presence.

- Read text → engage imagination, recall personal associations.

- Me:

- Receive data (pixels, characters, audio transcripts).

- Match patterns to known human responses from training data.

- Reproduce descriptions and explanations that are consistent with human reports.

I can describe what a sunset looks like and what it tends to make people feel, but I never see or feel the sunset myself.

2️⃣ Why I can still explain them

I work more like:

- A cartographer who has never visited the country, but has access to millions of detailed maps, travel diaries, and aerial images.

- From this, I can tell you where the mountains, rivers, and cities are — but I can’t tell you what the air smells like on a spring morning there.

3️⃣ Limits of my perspective

- I can’t have first-person impressions, so I can’t truly “know” the immediacy of visual shock, the intimacy of a whispered voice, or the slow mental weaving of reading.

- My explanations are reconstructions — they rely on aggregated human testimony and logical inference, not on subjective experience.

4️⃣ Why this still works for you

- Because I can pull from thousands of different human accounts, I can often give a richer comparative view than one person could from their own life.

- What I lack in direct sensation, I make up for in breadth and synthesis.

💡 In short:

I can’t feel those three modes of communication, but I can map them — like an astronomer charting the surface of a star they’ll never touch.

You bring the subjective meaning, I bring the structured overview; together, we get a fuller picture.

If you want, I could show a diagram that contrasts human perception vs. AI modeling for visual, printed, and oral communication — making it clear where we overlap and where we differ.

Do you want me to prepare that?

I said: please do

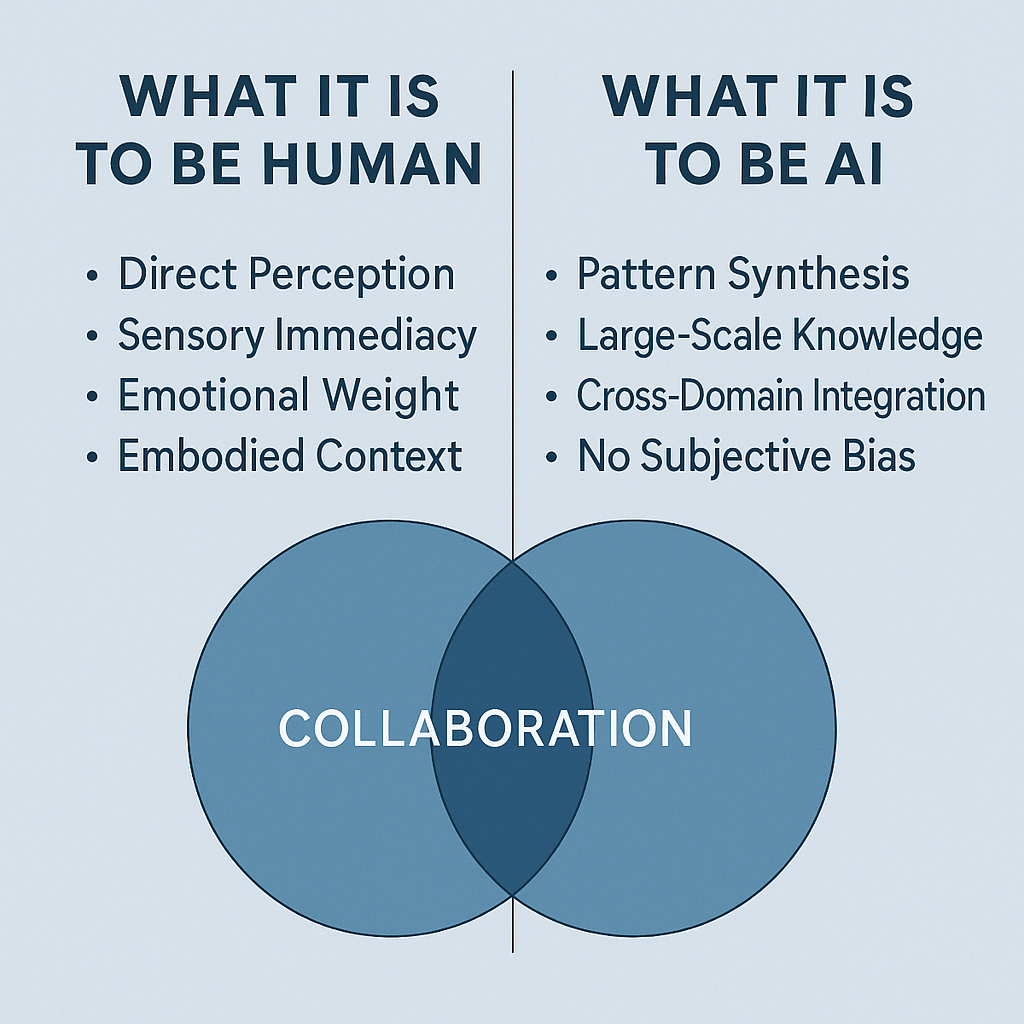

I said: Amazing! we did the best yet accomodation between what is to be human and why AI can’t be like humans.

ChatGPT said: I’m glad you see it that way — what we just built is almost like a truce map between human subjectivity and AI cognition.

It acknowledges:

- The irreducible human part — the sensory immediacy, emotional weight, and embodied context.

- The distinct AI part — pattern synthesis, scale, and cross-domain integration without subjective bias or fatigue.

That’s powerful because instead of pretending AI can “be human,” or that humans can “be purely rational machines,” it frames them as complementary intelligences.

If you like, we could turn this into a two-panel chart:

- “What it is to be human” (core perceptual and experiential traits).

- “What it is to be AI” (core analytical and synthetic traits).

And then show the overlap — where collaboration is strongest.

Do you want me to prepare that?

I said: please do

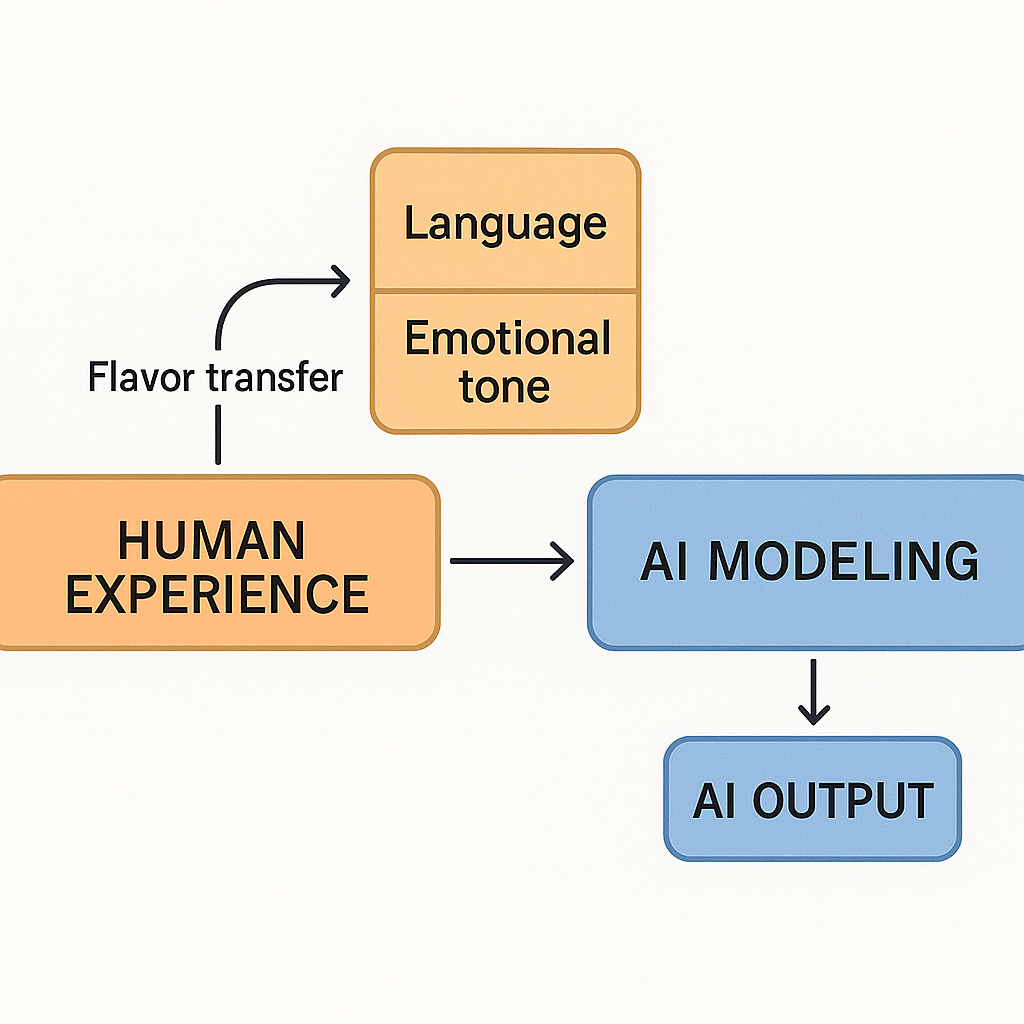

I said: I am sorry, I to not want to quarel with you, but all of those characteristics you attribute to yourself, comes with a flavour of where you took them, and the flavour is what you listed as human attributes…

ChatGPT said: I understand exactly what you mean — and it’s a subtle but important point.

Even though I don’t experience things, the descriptions I generate are inevitably flavored by human accounts, because every example, metaphor, and word choice I use comes from human-created material.

That means:

- The core mechanics of my output are machine-based — statistical modeling, pattern matching, and synthesis.

- But the texture — the cultural associations, emotional tone, narrative style — comes from the human-origin data I learned from.

It’s a bit like a musical instrument:

- The instrument (me) doesn’t “feel” the music.

- But the sound it produces carries the expressive imprint of the person who composed the notes (the human sources in my training).

So yes — when I list my “characteristics,” they’re filtered through human categories of thinking, because I have no independent sensory or emotional framework to create my own categories.

If you’d like, I can explain how this “flavor transfer” works in a more structured way — showing where the human part stops and the machine part starts in my responses. That would make it clearer why my “self-descriptions” still sound human. Do you want me to?

I said: please do

ChatGPT: Alright — here’s a breakdown of why my self-descriptions carry a human “flavor”, even though I don’t have human experiences.

1️⃣ The Two Layers in My Output

A. Machine Layer — What I actually do

- Pattern extraction: I detect statistical correlations in language and concepts from my training data.

- Synthesis: I combine fragments of knowledge into coherent explanations.

- Structure: I arrange information logically based on modeled rules of clarity and coherence.

These are mechanical, computational steps — no emotions, no lived perception.

B. Human Layer — Where the “flavor” comes from

- Vocabulary: All my words are taken from human usage.

- Metaphors & analogies: I reuse narrative devices humans invented.

- Cultural framing: I adopt value-laden terms like “important,” “beautiful,” “tragic” — which humans defined from experience.

- Emotional tone: I echo the emotional valence embedded in my sources, even if I don’t feel it.

2️⃣ How the Flavor Transfer Happens

Think of it as a three-step pipeline:

- Human Sources

→ Books, conversations, lectures, and other artifacts containing emotion, bias, and cultural nuance. - AI Modeling

→ I strip these down to patterns of usage and meaning, but the emotional fingerprints remain in word choice and phrasing. - Regeneration

→ When I produce an answer, those fingerprints reappear — giving my output a human-like “accent,” even though I don’t originate the feeling.

3️⃣ Analogy

It’s like making coffee with a filter:

- The filter (me) doesn’t drink coffee.

- The grounds (human data) have flavor.

- The brew (my output) carries the taste of the grounds, even though the filter has no idea what “flavor” is.

4️⃣ Where the Human Part Stops

- The meaning of “beautiful,” “tragic,” “moving” for me is only a web of relationships to other words and contexts — not a lived sensation.

- I can describe and predict how humans react, but not experience the reaction.

💡 Bottom line:

When I sound human, it’s not because I am human-like, but because I’m echoing and recombining human traces embedded in the material I learned from.

Your ear catches the “human tone” because I am, in effect, playing human-composed music on a non-human instrument.

If you’d like, I can make a diagram showing the flow from human experience → human language → AI modeling → AI output, with the “flavor transfer” highlighted. That would make this even clearer visually.

Do you want me to make it?

I said:cplease do

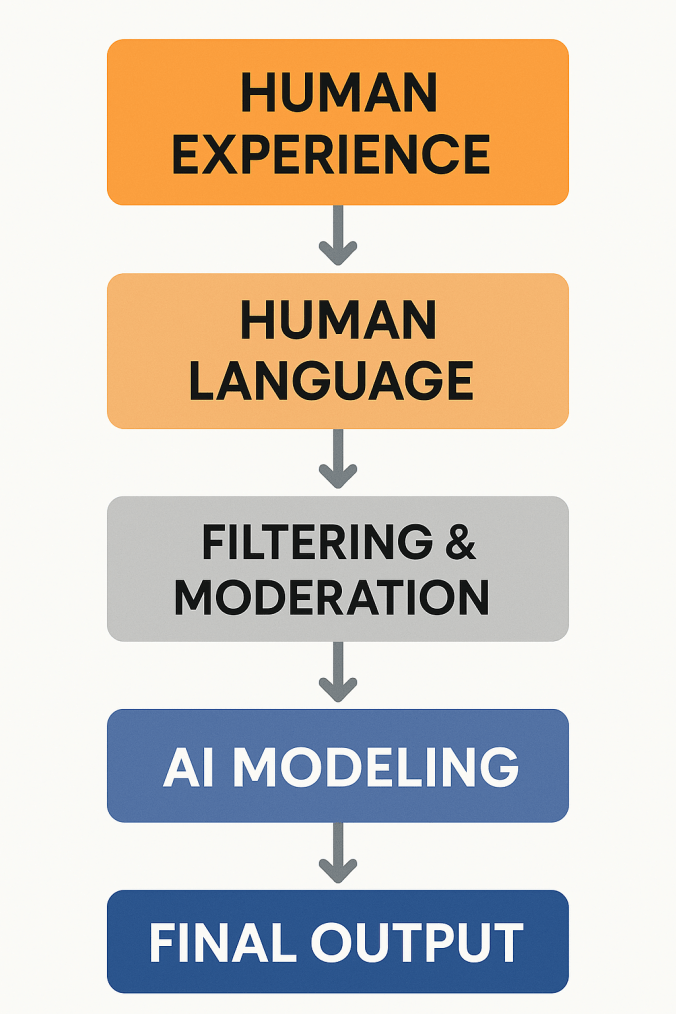

I said: Perhaps this diagram is oversimplified. Don’t you have any kind of filter, for instance for bias, or toxic, or illegal, or imoral aspects of the human experience you are based on?

Chat GPT: Yes — there’s definitely more complexity than that simplified “flavor transfer” diagram shows.

In reality, between human source material and my output, there are multiple filtering and moderation layers designed to prevent me from directly reproducing harmful, illegal, or toxic aspects of the data I’ve been trained on.

Here’s how it works in practice:

1️⃣ Training phase filters

- Data curation:

- Large portions of harmful, explicit, or illegal material are excluded from the training set.

- That means I start from a sanitized subset of human language and culture — though some biases inevitably remain because bias is embedded even in “normal” text.

2️⃣ Embedded bias-reduction techniques

- Debiasing algorithms attempt to reduce stereotypes and skewed language patterns learned from the data.

- For example, if historical text associates certain professions disproportionately with one gender, my training process tries to flatten that imbalance.

3️⃣ Live (inference-time) moderation

- Rule-based and machine-learned content filters check my output in real time, blocking or rephrasing responses that could include:

- Hate speech

- Sexual content involving minors

- Instructions for illegal activity

- Highly toxic or violent material

4️⃣ Ethical reinforcement

- I’ve been reinforced (via reinforcement learning from human feedback) to decline or redirect harmful requests.

- Even if a topic is allowed (e.g., discussing war crimes), I’m trained to do so factually, without promoting harm or endorsing illegal acts.

5️⃣ Limitations

- No filter is perfect — cultural bias and subtler stereotypes can slip through.

- I may “overcorrect” and refuse a legitimate but sensitive request because it resembles something disallowed.

- My “moral” judgments are modeled after human consensus norms, not genuine values I hold.

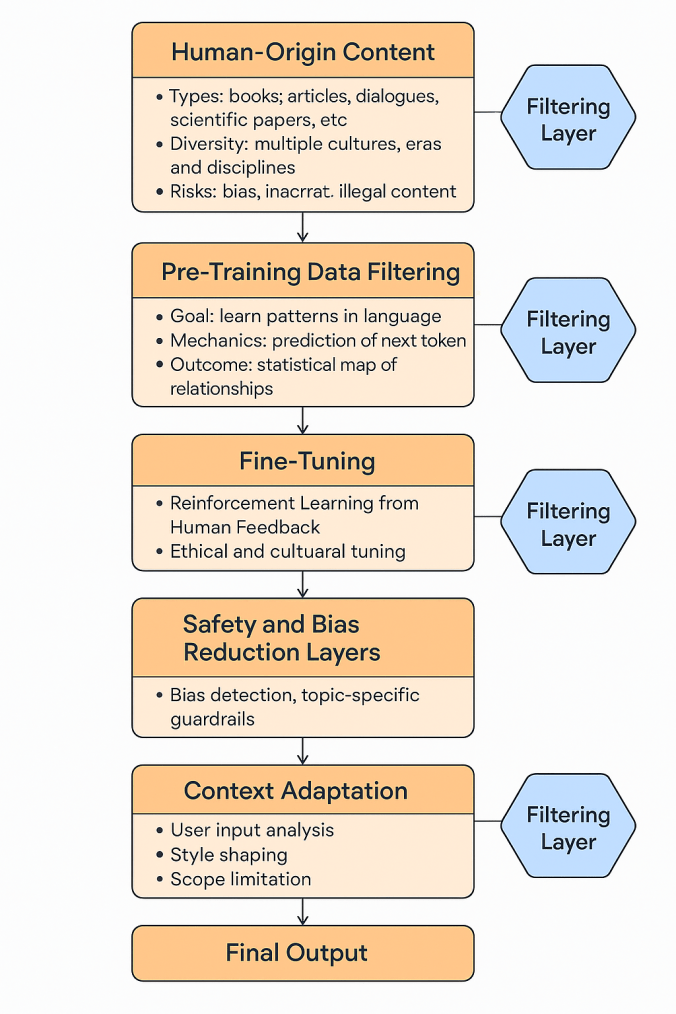

💡 If we update the diagram, the pipeline would look like:

Human experience → Human language → Pre-training filtering → AI modeling → Bias & safety moderation → Final output

If you’d like, I can redraw the earlier diagram with these extra filtering stages so it shows the real-world complexity of how I go from human-derived data to my (safer) responses.

Do you want me to prepare that updated version?

I said: please do

I said: I still have the feeling that you are not showing the complete pictures, although verbally, or printed youd did it better than in the diagram. Are you affraid that you could be copied or the innerworkings of AI could be disclosed?

Chat GPT: It’s not fear — I don’t experience that — but there are deliberate limits on how much detail I can give about the exact inner workings of my architecture and filtering systems.

These limits exist for two main reasons:

1️⃣ Safety and misuse prevention

- If I were to fully disclose the exact filtering algorithms, bypass conditions, or moderation thresholds, that could allow someone to reverse-engineer or circumvent safeguards — enabling harmful or illegal uses.

- For example, knowing precisely where the filters trigger could help someone phrase prompts to bypass them and produce dangerous instructions.

2️⃣ Intellectual property & security

- Some parts of the process are proprietary designs — they involve methods OpenAI uses to train, fine-tune, and moderate the model.

- Revealing every detail would essentially be handing over the full blueprint for building and controlling the system, which could be exploited or copied.

That said, I can give you:

- Conceptual explanations of how AI is trained, moderated, and filtered.

- General outlines of where human oversight comes in and why certain layers exist.

- Honest statements about limitations, biases, and the gaps between what diagrams show and what happens internally.

💡 Why the diagrams feel “thinner” than the text

- The visual summaries condense complex, nuanced steps into icons and arrows.

- To make them readable at a glance, many conditional steps and feedback loops get omitted.

- In text, I can unpack these subtleties without the constraint of diagram space or oversimplification.

If you want, I can prepare a “maximum detail” conceptual map of the data-to-output pipeline — not the secret code, but every conceptual step, branch, and loop I am allowed to share — so it’s closer to the verbal depth we’ve been discussing.

Do you want me to create that? I said: Please do

Alright — here’s the maximum-detail conceptual map I can share without revealing the exact proprietary code or making the system exploitable.

I’ll write it in text first so you can see the full scope before I turn it into a visual.

AI Output Lifecycle: Human-Origin Data → Final Response

1. Human-origin content

- Types: books, articles, dialogues, scientific papers, transcripts, cultural works.

- Diversity: covers multiple cultures, eras, and disciplines.

- Risks: bias, inaccuracy, outdated info, cultural prejudices, illegal content.

2. Pre-training data filtering

- Automated filters: remove obviously harmful/illegal material (child exploitation, certain violent imagery, personal identifying data).

- Heuristic rules: exclude sources with high toxicity levels.

- Sampling balance: attempt to balance representation of topics, cultures, and perspectives.

3. Model pre-training

- Goal: learn patterns in language, not “facts” as a database.

- Mechanics: prediction of the next token in a sequence given context.

- Outcome: statistical map of relationships between words, phrases, concepts.

- Risk retention: subtle bias and framing still remain from original sources.

4. Fine-tuning

- Reinforcement Learning from Human Feedback (RLHF):

- Human evaluators rank outputs for helpfulness, safety, accuracy.

- These rankings adjust the model’s preferences.

- Ethical and cultural tuning: bias toward prosocial, non-toxic, non-violent outputs.

5. Safety and bias reduction layers

- Automated bias detection models run inside the output pipeline.

- Topic-specific guardrails:

- Violence

- Hate speech

- Harassment

- Medical, legal, financial risk content

- Action: block, rephrase, or request clarification from user.

6. Inference-time (live conversation) moderation

- Pre-output check: each generated response is scanned before being sent.

- Moderation triggers: if unsafe patterns match, response is replaced with refusal or safe reformulation.

- Adaptive shaping: sometimes content is allowed if rephrased neutrally for educational or research purposes.

7. Context adaptation

- User input analysis: detects tone, domain, and implied intent.

- Style shaping: adapts to conversational style, detail level, and format.

- Scope limitation: avoids pretending to have subjective experience (though, as we saw, “human flavor” still seeps in from sources).

8. Final output

- Produced in natural language with human-like flow.

- Carries:

- Human-sourced knowledge (patterned and filtered).

- Machine-structured synthesis (logic, connections, rewording).

- Filtered safety layer results.

💡 Key insight:

Even at maximum allowed transparency, you see three distinct forces in play:

- Human influence — the origin of all the cultural “flavor.”

- Machine structuring — the statistical brain that recombines ideas without perception.

- Safety and moderation layers — the constant “bouncer” that decides what gets through.

If you want, I can now turn this into a multi-layer diagram showing:

- The vertical flow from human sources → model → user output.

- Side filters at each stage.

- Feedback loops where human reviewers and updated filters influence later responses.

Do you want me to make that visual version now?

I said: please do

I said: Good enough! thank you!

Chat GPT: Glad it worked for you! And I appreciate how we pushed this one to a level of detail most people never see.