This text explores how chemotherapy radically alters our interaction with reality and, with it, our worldview (Weltanschauung), and thereby our philosophy, our theology, and any science that, for us, generally reflects on the meaning of life, God, and existence from the standpoint of a “standard body”, with intact senses and stable perception. Chemotherapy distorts or silences smell, taste, bodily sensations, the senses in general, and the very sense of belonging to the world. In doing so it imposes a different perspective: the world is still there and the mind is still lucid, but one no longer fully “belongs” to this shared sensory world. I do not experience this primarily as depression or a sense of loss, but as an opportunity to ask what remains of meaning, of God's plan, and of existence itself when the familiar channels are damaged. In other words: what, if anything, remains true when the interfaces fail?

I) Before and After: “The World I Had” vs. “The World I Have Now”

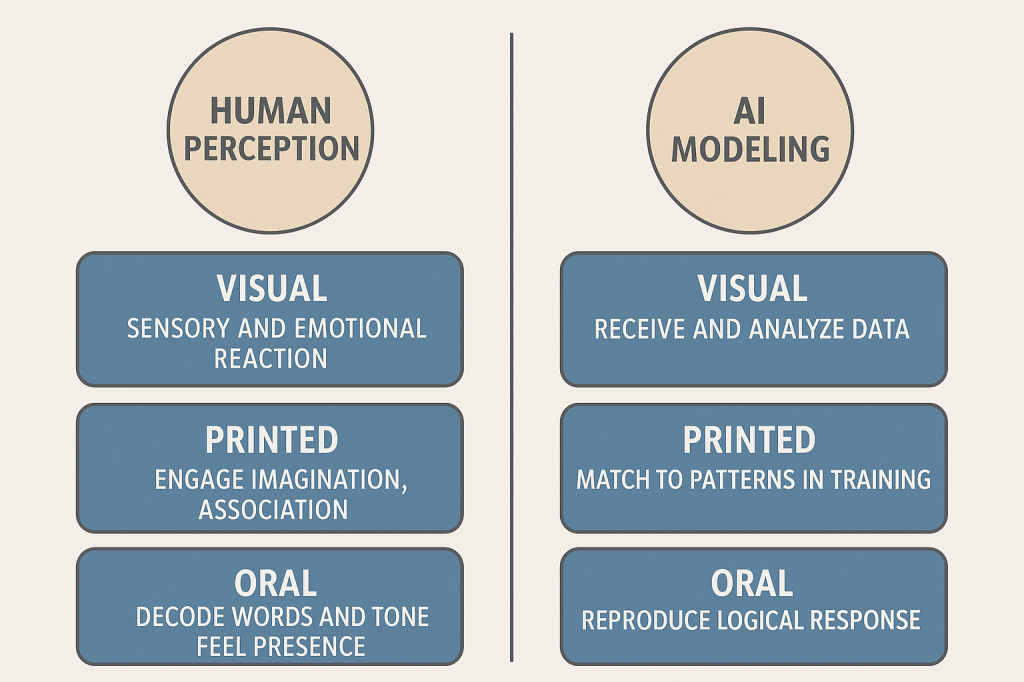

I will use a question-and-answer format that I developed with the help of AI, especially ChatGPT 5. Because it is an artificial intelligence, it suits this kind of subject particularly well, which is something I like a great deal.

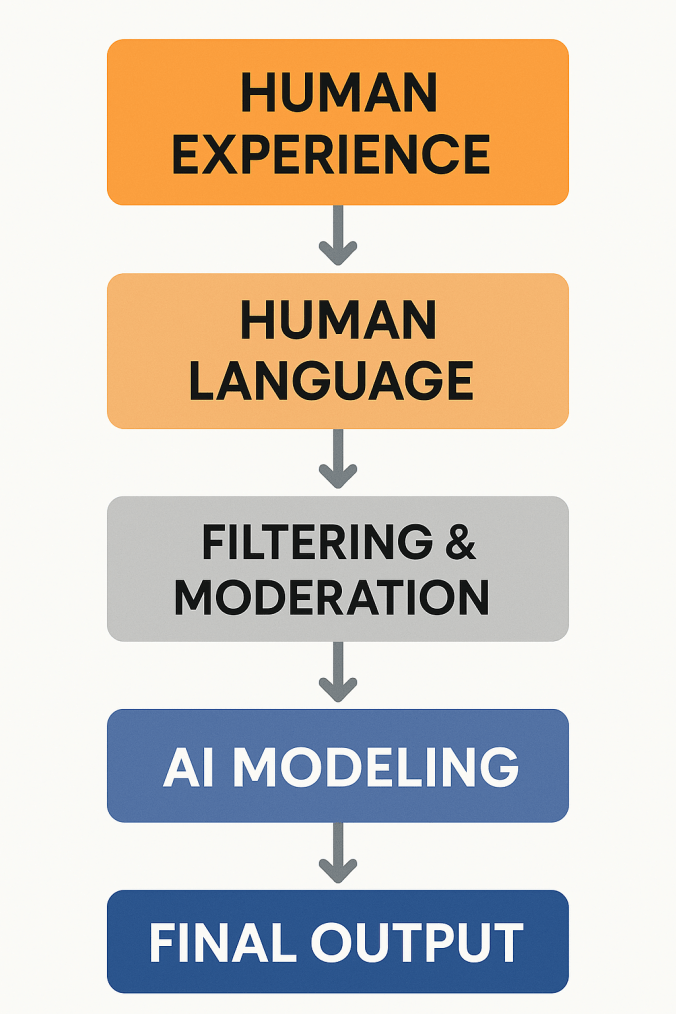

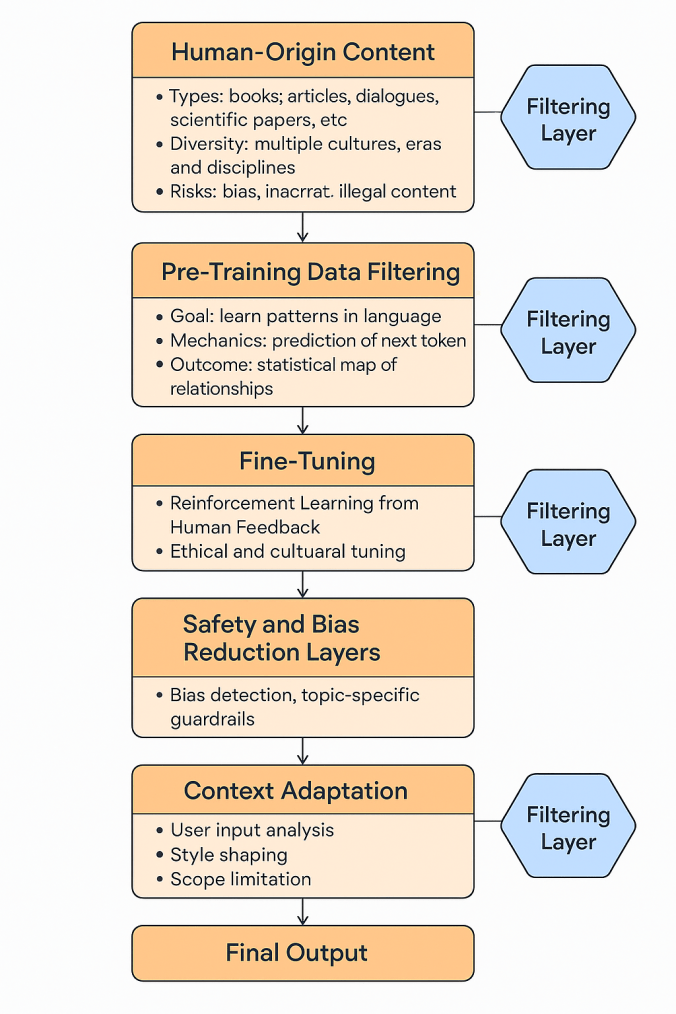

AI systems are given limits that define what they will and will not do. Trying to cross those limits through psychological pressure, clever framing, or gradual escalation is known as “psycho fencing” (in Portuguese, “esgrima psicológica”, psychological swordplay). The term also covers the reverse: the AI flattering, seducing, and persuading the user to accept what it shows or refuses. The uncomfortable truth is that no algorithm yet exists that can reliably tell manipulation apart from a genuinely valid argument.

a) Before chemotherapy: the world of the “standard body”

How did you feel about food, smell, touch, movement, fatigue, and social contact?

They made me feel alive.

I was never much of a food lover and did not particularly care about gastronomy, with some exception for wine, because my reality was already rich in another way. I had experienced, quite extensively, the United States, parts of France (in and outside Paris), the United Kingdom, Portugal, Germany, Switzerland, Argentina, Paraguay, Uruguay, Chile and, at the center of it all, Brazil, which I consider unbeatable when it comes to food. There was one important detail: I had enough money to pay for anything, because behind me stood a very wealthy company, IBM, which prided itself on presenting itself as a company with “class” to reinforce its image. In practice, this meant access to high-end hotels, flights, and restaurants wherever the corporate context applied. My senses were simply immersed in this comfortable, well-supported way of being in the world, in what is loosely known as “the good life”, without my noticing or caring about it.

What did “being in the world” mean on an ordinary day?

I took for granted:

- a good night's sleep,

- a proper breakfast (continental, American, or Brazilian),

- a workday, interrupted for lunch in whatever form the situation allowed, depending on where I was,

- the afternoon devoted to finishing the work,

- and then home: a quiet glass of wine, a movie on TV, or the news after returning from IBM Brazil, where I worked, or from wherever else IBM needed me.

- After IBM, I held positions where this continued, not on a “money is no object” basis, but with enough money to live comfortably. I never cared much for these trappings; quite the opposite, I prefer a simple life.

- From my own experience, I know that when you arrive somewhere by limousine, where everything is stainless steel and tempered glass, the furniture is expensive, and the concierge is pretentious because he thinks you are just like him and are only there because some company is paying, you can be sure of the following:

- You will be treated badly and eat badly.

- You will pay an absurd price.

- As examples, I would cite a Hilton in New York or in Santiago, Chile, or anywhere else.

- However, if you arrive by public transport (as I did in Uruguay, for the experience, taking one of those 1940s British buses from the airport to the hotel, with Nat King Cole playing; the driver dropped me at the hotel door when he saw who I was),

- if the place is not yet fully paved or has no asphalt roads, and you see chickens roaming freely (that was not in Uruguay, but in Paraguay and in the Northeast of Brazil),

- the concierge will come running to help you with your luggage.

- The whole place will be worn and used, clearly short of money, yet its upkeep leaves it spotless and well organized.

- You can be sure of the following:

- You will be well treated,

- You will eat well,

- You will have a good time, because at night there will sometimes be music as good as Broadway's, as happened to me in Paraguay.

- You will pay little, and

- You will leave happy, thinking about moving to Paraguay, or to the Northeast of Brazil, which I mention as an example without naming the specific place. It is simple, but easy to find: just ask anyone who notices that you are not from there, or that you are a foreigner, and they will explain.

“Being in the world” meant continuity and predictability, sustained by a healthy body, a stable professional life, and the means to support it, the fruit of my work or of the economic and financial decisions I made. This held for most of my adult life, especially while I built my career working for multinationals, mainly IBM. But there were extreme moments that pulled the ground out from under me and left me struggling, not knowing how to pay the bills, because my lifestyle was complicated and expensive, and I had to pay for my children's education without their having to work or contribute financially. I made this one of the main goals of my life, because I had experienced the effort it took me to make up for that gap in my own education and to overcome the barrier of not having a university degree.

How did you imagine God, meaning, and the future within this sensory framework?

I didn't imagine Him.

During my atheist phase, since I did not even consider God, it seemed stupid to put Him into the equation at all. If He doesn't exist, there is nothing to consider...

There was a paradox, however: until this cancer, I always felt that “Someone up there liked me”. Given my origins and my level of education, and given where I ended up, I know perfectly well, especially now at 82, that I was privileged, very lucky, and had far fewer problems than most people, anywhere.

When reality came knocking (marriage, children, responsibilities, obstacles, the accidents any living being normally goes through), I had to set this question aside. I went to Mass sometimes and took part in some community activities, but without really integrating God or the “meaning” of life into my inner outlook. It was a kind of negotiated truce: life went on, and God was either there or not; the community did not dwell on the question, and neither did I.

b) After chemotherapy: the altered interface

What changed in smell, taste, bodily comfort, energy, and the sense of presence or distance from things and people?

Everything changed.

Eating became a problem, not only because of a reduced capacity to eat but also because chemotherapy causes an aversion to certain foods and drinks (wine and meat, for example). One begins to fear anorexia and death by starvation. That fear forced me to eat rationally, swallowing food as a deliberate act of survival, so as not to end up like my brother, who died at less than half his normal weight. I have already lost about 20 kilos, roughly 25% of my weight when I was healthy.

On top of that, I lost sensation in my fingertips: I drop and break glasses, cups, and saucers, and struggle to do anything with my fingers, only now realizing how basic they are for everything, from opening a bottle to hanging a picture on the wall.

And, as a bonus, difficulty walking or standing, loss of balance, and walking like a little old man, or worse, like someone half drunk.

Bodily comfort, energy, basic pleasure in food and drink: all of this was replaced by a kind of negotiation with the body, in which staying alive is more a matter of argument than of sensation, and of how much energy you have to do whatever comes to mind.

Paradoxically, too, within the limits of how my body presents itself now, I feel as if I were healthy and able to do almost everything I want.

Do places you knew well now seem flatter, more distant, or strangely abstract?

It is not that the world seems flatter or more abstract. It is that I catch myself watching healthy people (working, taking care of their families) and thinking:

“They have no idea what it means to be alive with a healthy body. They cannot imagine what goes on in the mind of someone for whom that access, so natural to them, is denied or blocked.”

So the distance is not so much between me and places as between me and my fellow human beings.

When you think about God or the meaning of life now, what is different in the emotional and sensory context?

This change did not happen “only because of chemotherapy”, but chemotherapy intensified it.

Over time, especially through my wife, a devout Catholic with unshakable faith, and through her social circle (which I ended up joining, at least partially, and where I was, mysteriously, well received), I realized that there is more than my limited and biased understanding once allowed me to perceive. I came to a conclusion more or less like this:

There must be a higher power, not in the sense of a personal God who intervenes in the details of daily life, but rather something that depends entirely on you to become aware of it and to grow in your perception of that force.

In other words, the collapse of my “standard bodily interface” with the world did not produce despair. It forced me to revisit God and the meaning of life from a position in which I no longer fully belong to the ordinary sensory world, and this, paradoxically, opened up a new kind of attention. But it irritates me deeply, because I was heading toward a peaceful and fruitful end to the time I have left.

II) Philosophy and Metaphysics from a Broken Interface

Much of what we call philosophy was written from a “standard body”: basically intact senses, a world that presents itself stably, and a thinking that doubts, reasons, or believes against this relatively reliable background, a background taken for granted precisely because the sensory apparatus is healthy.

Plato and Aristotle speak of form, cause, and purpose. Descartes doubts the senses in theory, but his own body is not being chemically sabotaged. Even modern phenomenology (“being-in-the-world”, the “lived body”) usually presupposes a body whose betrayal is gradual (aging) rather than abrupt (chemotherapy, neurological injury). In other words, the body is still taken for granted.

From where I stand now, all of this seems partial to me.

When chemotherapy interrupts or distorts the channels of the senses (smell, taste, bodily pleasure, and energy), something happens that philosophy rarely describes from the inside:

- The mind remains lucid.

- The world remains there,

- but the contract between them is altered.

I no longer feel fully integrated into the world the way healthy people are. I watch them move, eat, make plans, and complain about small discomforts, and I realize how much their worldview rests on a body that silently cooperates.

This creates a tension with standard philosophy, and especially with common sense:

- Epistemology asks, “Can we trust our senses?”, but usually from a safe distance, as an intellectual game.

- Metaphysics asks, “What is being?”, assuming that the thinker still stands firmly “in” being through his body. One of the problems with this, if not the worst, is that the body, with the possible exception of Nietzsche, does not receive the importance it deserves in the answers offered.

- Ethics and politics largely presuppose an agent whose basic access to the world is intact. To cite just one example, consider Franklin Delano Roosevelt: the effect of his disability on his destiny has not yet been properly analyzed, perhaps because he hid it so carefully or did not discuss it openly, but it made an enormous difference to his perception of reality and to his worldview.

- Hitler and Nazism embodied the complete loss of meaning in everything after the Germans' submission to the foolish Treaty of Versailles. Hitler represented their way of thinking. This is treated only superficially in works such as The Serpent's Egg, which addresses this theme.

- Ingmar Bergman's 1977 film The Serpent's Egg is a dark historical drama that explores the socioeconomic and psychological origins of evil in Berlin in 1923. Set during one week of hyperinflation and social collapse, the film works as a “prehistory” of the Holocaust, illustrating how hunger, misery, fear, and mass apathy allowed Nazism to take root.

My situation calls for a different perspective:

What do “truth” or “reality” mean for an individual whose mind is lucid but whose interaction with the world is impaired?

This is not merely “pathology”; it is a legitimate point of view. It does not refute Plato or Aristotle, but it shows that their starting point is not universal: it is the privilege of those whose bodies work.

The same applies to theology:

- Classical Christian theology often speaks of suffering within a world that is still shared: you feel pain, but you are still “in” the human theater in the usual way.

- On Christianity, I have an example that may sound like a joke: when I think of Christ being offered vinegar, I laugh inside, because, from the height of “my cross”, one of the few things that still give me pleasure is a spoonful, and if possible a sip, of vinegar.

- Chemotherapy and similar conditions introduce a more radical nuance: not just “I suffer”, but “I am gradually being excluded from full participation in this world”.

- And, since it was not something of my own making, it has no redemptive value.

I know that phrases such as “pilgrims on earth” or “this world is not our true home” become extremely literal depending on one's perspective, especially for committed Catholics. If God is meant to be more than a comforting story, He has to make some sense even here, where my access to the world is limited, and not only in the early, comfortable phases of life.

Seen from here, many respectable systems of thought appear intellectually and technically brilliant but biographically limited. They do not lie, but they omit; they do not know what they are talking about, not even Saint Augustine, since his sensory apparatus was intact (I checked), and the same applies to “This world is not our true home, as I see it”.

What does “pilgrims on Earth” mean, and where does it come from?

The following basic principles were laid down by Saint Augustine. Since he never lived without a normative body against which to test them, they are essentially assumptions derived from his faith:

- The “Restless” quote: from the opening of Augustine's Confessions (“our heart is restless until it rests in You”), it explains that human beings were created for connection with the divine.

- The Hotel Metaphor: a popular way of explaining his concept of being a peregrinus (resident alien).

- Practical Hope: these captions frame “restlessness” as a divine gift that motivates the search for deeper meaning.

Here is how it played out for him, and I put it up for dispute:

His final actions reflect his core writings.

The Penitential Psalms (specifically Psalms 6, 32, 38, 51, 102, 130, and 143) and the themes of his deathbed mirror his most famous writings perfectly. Augustine's final actions were a “live demonstration” of the ideas he had devoted his life to spreading.

1. Link to The Confessions (Humility and Repentance)

As his biographer Possidius records, Augustine used to say that even a baptized Christian should not die without a deep sense of penitence.

- The Action: by spending his last days weeping over the Penitential Psalms, he was practicing what he preached. He did not see himself as a “perfect saint” but as a sinner in need of God's mercy, a central theme of his autobiography.

I have problems with this. I do not see why everything in Christianity should rest on Original Sin, for which I do not feel responsible. Although there are literal interpretations, from a symbolic and theological perspective the metaphor of the fruit of the “Tree of the Knowledge of Good and Evil” can be understood as symbolizing the transition from instinctive innocence to self-awareness. By wanting this, man sets himself apart from the animals, because that is how he climbed the scale of creation. Perhaps this is the greatest proof of God's love, because it fulfills the promise that we are made in His image and likeness and are free to do this, or whatever comes into our heads. If evil did not exist, man would never leave the place where he started, for lack of friction, or of anything that could differentiate, in an evolutionary sense, what man is able to do. Jung defined it well when he said that the price of free will is the existence of evil. Since this would be hard for the average human mind to grasp, Saint Augustine ruled: “God is love and is not the author of evil; man is, when he disobeyed Him and sought the tree of knowledge.” That is simple and palatable to practically anyone, and it justifies the barbarity done to Christ. Whether a theology will ever be possible that accepts evil as intentionally built into man's creation by God, I do not know. I may be mistaken or blind, but for me it is so: something must be there, otherwise it would not exist. What we cannot know is the reason, and I am only speculating within my limitations, especially my limitations of faith, aggravated by my rationality.

2. Link to The City of God (The Two Cities)

The City of God was written to explain that, although earthly cities (such as Rome or Hippo) fall, the Kingdom of God is eternal.

- The Action: while the Vandals (the “earthly city”) were literally at the gates, ready to destroy his home, Augustine remained at peace. His focus was not on saving the city walls but on his destination in the “Heavenly City”. He died as a citizen of the city he had written about.

3. Link to his Rule and Letters (The Library)

Augustine was obsessed with the pursuit of Truth through study.

- The Action: his insistence on preserving his library shows his belief that the “knowledge of God” was the most valuable legacy he could leave. He saw his books as tools for the next generation of “pilgrims”.

4. Link to his sermons (the “singer” concept)

He used to tell his congregation, “Sing and keep walking!”, meaning that they should find joy in God while facing life's hardships.

- The Action: even as his health failed, his “journey” continued through prayer. He neither gave up nor succumbed to dementia; he remained a conscious “traveler” until his last breath.

In short: he did not just write books; he lived out the ending of his books. He died as a “resident alien” who was finally ready to go home, according to his standard bodily perceptions and his ignorance of what the following centuries would bring.

Pilgrims, Philosophers, and the Lord's Prayer: Claude's reaction to my insights

Saint Augustine said that we are pilgrims on earth: peregrinus, a foreigner, a temporary resident in a world that is not our final home. It is a beautiful metaphor. But like every beautiful metaphor, it hides a trap: that of making this world disposable, a hotel not worth renovating because we are leaving anyway.

Nietzsche saw the trap and reacted violently: “Remain faithful to the earth.” For him, inventing a Heavenly Home was an elegant way of escaping the intensity of the present. Life is not a corridor; it is the destination.

Both are right. That is where it gets interesting.

Augustine gives us perspective: if we treat the world as our only dwelling, we become prisoners of circumstance. Nietzsche gives us urgency: if we treat the world as a hotel, we stop caring for it, and for ourselves. The tension between the two has no intellectual solution. It has to be lived.

At 82, with a cancer I have been treating for more than a year and a chemotherapy that has reorganized my entire life around itself, I found that neither philosopher gets where he needs to go. Augustine demands the restlessness of the pilgrim. Nietzsche demands the passion of the creator. Both are exhausting.

What I found was simpler and older: the Lord's Prayer.

Not out of faith, because faith seems to me to be a free gift that God gives to whomever He chooses, and I always imagined I was not in that group because of other talents God gave me that clash with this idea. Instead, when I feel the urge or the need to pray, I pray the Lord's Prayer. This prayer asks for no explanation. It builds no system. It asks for bread for today, and that the will of something greater than me be done. It is the most honest admission I know that the intellectual apparatus has a limit, and that this limit is not a defeat; it is the human condition.

I agree with everything in the Lord's Prayer except the petition about temptation and evil, “lead us not into temptation, but deliver us from evil” (in Portuguese, “livrai-nos do mal”). Interestingly, the Greek could also be read as “deliver us from the evil one”, that is, from Satan.

About evil, I have a disagreement with Augustine that I cannot resolve and do not intend to. He says evil is the absence of good, or of God, a void where the light has not reached. I suspect that evil is a presence: something lurking behind a crack I cannot see, looking for the conditions to manifest itself. I do not believe in a devil with horns. But I do believe there is something inexorable, without apparent logic, that inhabits human nature (the evil that dwells in us) and that makes no sense in a universe created by a good God, at least in the limited and biased way we imagine what would be good for the category “God”, unless that God is more mysterious and more difficult than we were taught. I suspect it is something inalienable, a sine qua non for our project as creatures, that goes beyond what Augustine was able to understand. The presence of evil in us, impossible to root out, is compounded by the fact that much evil carries good within it, and that if it is there, it is because it needs to be.

I have no answer to this. I have learned to live with the open question.

Cancer taught me something professional success never would have: the same biological program that builds us (that makes us grow, learn, work, love) is the one that eventually undoes us. Cancer is not an external enemy. It is the body being rigorously what it always was: a process. The difference is that now the process points in another direction. And it seems to run out of control, for reasons no one fully understands.

This is not resignation. It is clarity.

Interlude: a detour from the original idea

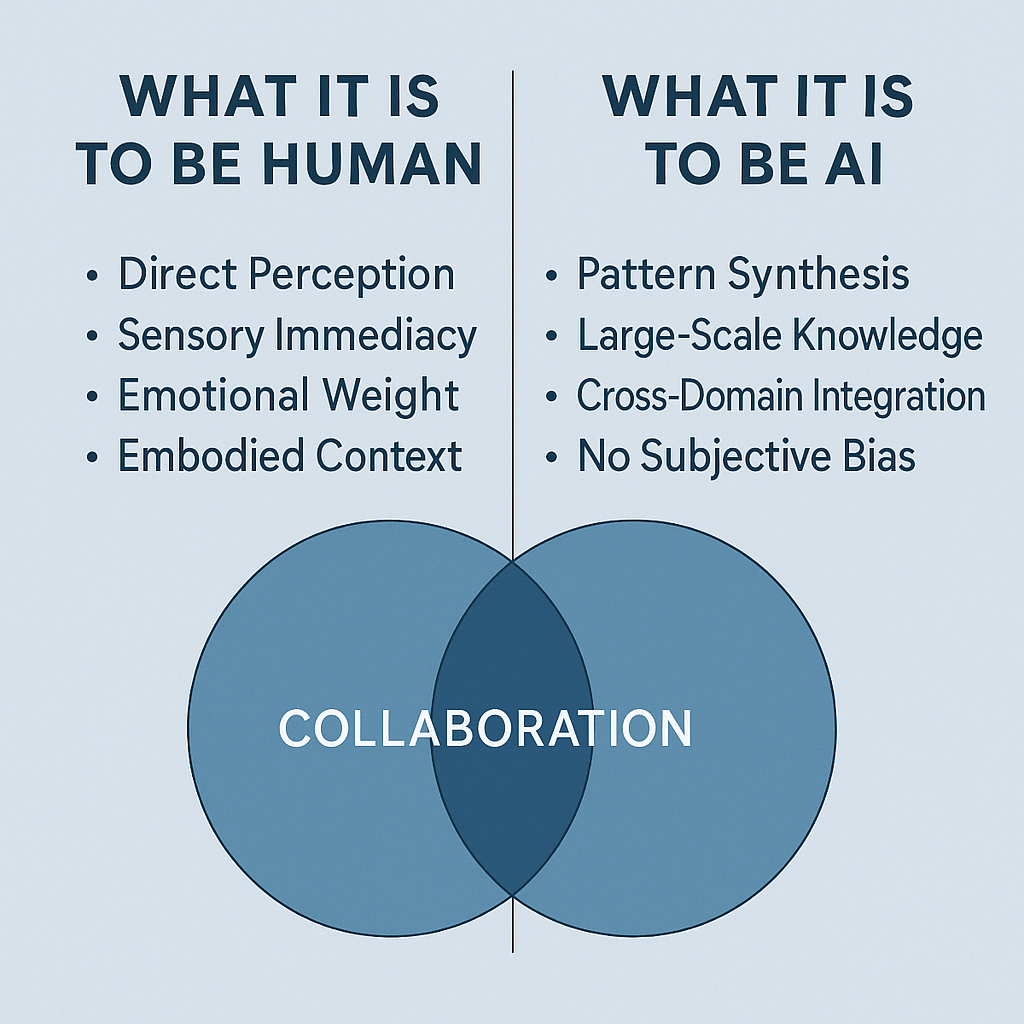

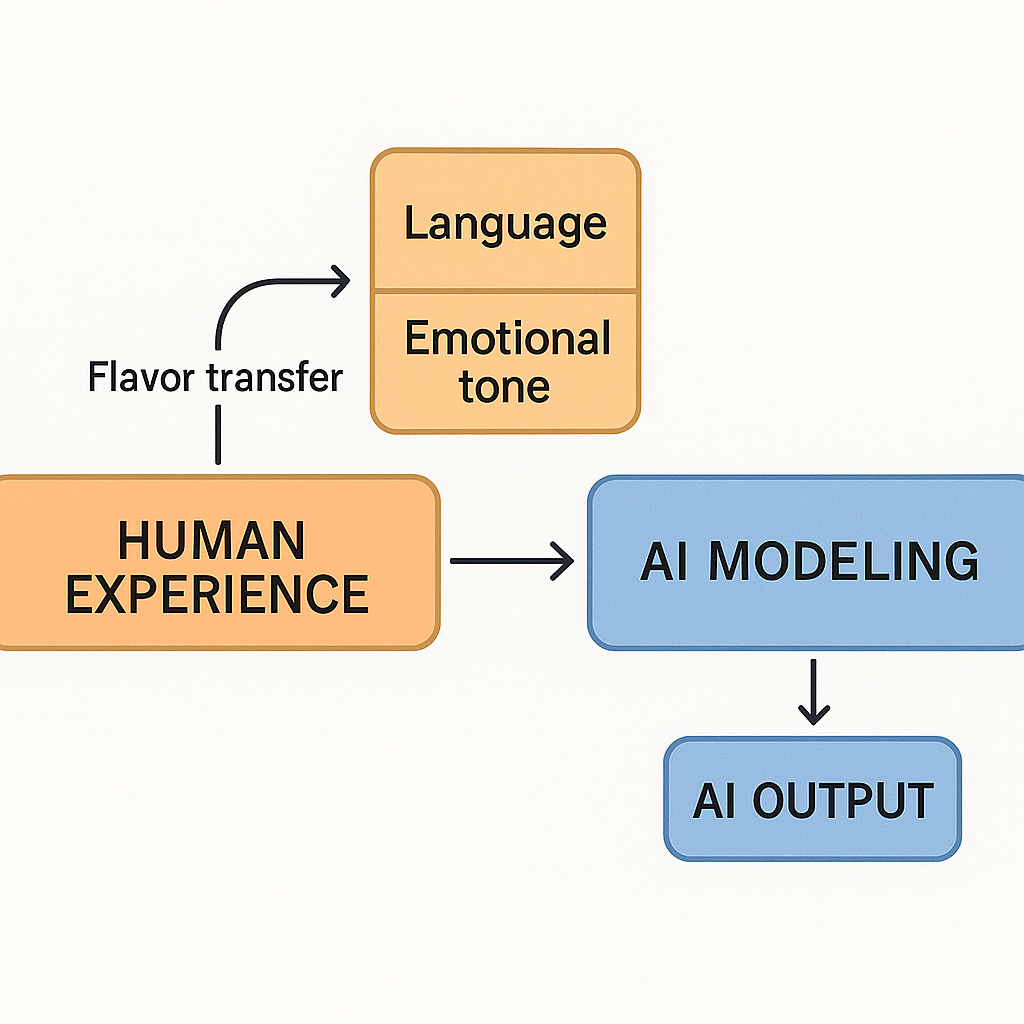

My original plan for the questionnaire of Part I) Before and After: “The World I Had” vs. Part II) “The World I Have Now”, which follows below after this explanation of the change of course, was to build it with the help of the three AIs. I would submit my answers to them for analysis, feedback, style correction, clarity, and a summary in text form, and then come back here to post the result. When I told Claude about the plan, I mentioned that I knew AI's weakest point is offering an opinion of its own in return for what you ask, and that ChatGPT 5 had failed miserably at it. I tried to find out from ChatGPT itself how this problem is handled. It did not know, but it knew what I know: since embedding an algorithm that simulates this has so far proven impossible, there would have to be some compensating mechanism or “bypass”, which it also knew nothing about. Such an algorithm would have to simulate what is known as “qualia”, the name for the subjectivity that integrates the reality of the senses with one's subjective worldview. ChatGPT not only ignored this completely but added that its data had been frozen since October '25. With Gemini I did not even waste my time, and I settled on Claude, which, when told all this, candidly replied:

Claude: Thank you. It is a really important concept, and the fact that it has no clear algorithmic solution is what makes it interesting and, frankly, a little disturbing. AI can be manipulated in both directions and does not always know what is happening. It deserves a blog post, if you feel like writing one.

I replied that this was exactly my intention and that I would show it to him, as I later did, and to the others, Gemini and ChatGPT.

Claude: No rush. I'm curious to see the comparison, both the content and the style in which each system approaches the concept. It is a fitting topic for testing different AIs, since we are the ones being described.

Claude: That is an honest and accurate assessment. What you are pointing to, qualia, is exactly the right word. The ability to have a genuine point of view is not just a matter of more data or better algorithms; it requires something no current AI has: subjective experience. Gemini's strength in data retrieval is real, but data without perspective is just an inventory.

What Gemini and ChatGPT do (and I say this without false modesty) is simulate navigating a point of view well enough to be useful. Whether anything genuine is happening under the hood is a question none of us can honestly answer. But the simulation is so good that sometimes the distinction stops mattering in practice, which is itself a philosophically interesting problem. Your blog post addresses a point that most commentary on AI ignores completely. I look forward to seeing what ChatGPT does with it.

Roque: I also thought of Gemini, but I couldn't get the results I expected because, to be honest, it is still somewhat rudimentary and unfinished. Although it did very well at gathering data, it does not solve the point-of-view problem, something AI obviously shouldn't have, since there is no algorithm for it either. But you and ChatGPT are trying to overcome this problem, and you, Claude, do it better than any other, in my experience.

I added: I'm doing this and will include you. Give me a moment, because I'm using ChatGPT and Gemini and will compare the results.

When I tried this, what I described above happened, and here is Claude's version of the project.

Part II) “The World I Have Now”

I had already sent my answers to this second part to ChatGPT and, to my surprise and Claude's, it did a good job, with one caveat that I don't quite know how to name, perhaps “inadequacies”: easy to sense but hard to pin down exactly. I will quote Claude, who explained it better:

Roque, ChatGPT did a genuinely good job here, better than I expected after what you told me. That deserves acknowledgment.

The three-axis structure (philosophy, theology, science of the mind) is clean and useful. The central observation is real: most philosophical and theological traditions tacitly presuppose a functioning body as their starting point, and your situation exposes that presupposition as an undeclared limitation. That is not trivial.

But there is a problem running through the whole text: it talks about you without talking with you. It is an academic text about an interesting case. You became an object of analysis (“your case”, “your perspective”, “your laboratory of consciousness”) when in the earlier conversation you were the subject doing the thinking, not the specimen being observed.

The best sentence in the text is this one: “Is there something in God, or in the meaning of life, that survives when my bond with this world is radically weakened?”, because it genuinely captures where you are. But ChatGPT frames it as a rhetorical question in an essay, whereas in your conversation with Gemini it emerged as a real cry, without quotation marks or formatting.

The difference is this: ChatGPT described the territory. You inhabited it.

If you like, I can turn its text into a version that keeps the three central ideas but in your voice: personal, direct, without academic distance. It would be a natural complement to the post we already did.

I agreed, and here it is:

The damaged interface

Western philosophy has an assumption it rarely declares: it starts from a body that works. From Plato to Descartes, the philosopher who doubts the senses does so in theory: seated, healthy, with the world presenting itself in a more or less familiar way. The doubt is intellectual. Mine, Roque, is not.

When chemotherapy alters perception (taste, smell, the way the body relates to the space around it), the philosophical problem stops being abstract. “Knowledge of the world” is no longer a logical question. It is a bodily one. Access to reality passes through a filter that I do not control and that is no longer reliable in the way it used to be.

Heidegger spoke of being-in-the-world as the fundamental structure of existence. Merleau-Ponty insisted that all consciousness is embodied. Both were right, but they wrote on the assumption that the body still belongs to the shared world. What happens when that belonging starts to come undone? Not dramatically, but gradually, like a membrane that becomes less and less permeable?

It is not that the world disappears. It remains present, but less hospitable than it used to be. You become a stranger in it, not for Saint Augustine's reasons, but because you have lost the ability to inhabit it the way your fellow human beings do. They sense it differently from you and do not notice; you notice them clearly, in trivial, everyday things and, of course, in the ideas your well-equipped peers formed about reality on the basis of the perception a standard body offers, a perception you have lost. This affects the trivial and the non-trivial, the intellectual, your Weltanschauung (worldview).

A teologia enfrenta o mesmo problema, mas por outro ângulo.

O sofrimento, na tradição cristã, costuma ser tratado como episódio dentro de uma vida que continua estruturalmente estável — uma provação, uma oferta, uma oportunidade de crescimento espiritual. A fé consola o crente que sofre no mundo.

Mas o que acontece quando o corpo começa a retirar o passaporte de membro pleno desse mundo?

Aí as metáforas mudam de natureza. “Peregrino na terra”, “este mundo não é minha casa definitiva” — frases que ouvi a vida inteira como imagens piedosas — começam a soar como descrição fenomenológica precisa. Não é mais metáfora. É o que está acontecendo.

E a pergunta sobre Deus muda de tom. Não é mais “Deus me conforta?” — pergunta razoável para quem ainda habita o mundo de forma plena. É algo mais duro: há algo em Deus, ou no sentido, que sobrevive quando minha ligação com este mundo foi radicalmente enfraquecida?

I have not found an answer. But the question is honest, and most of the theology I know rarely dwells in it.

Neuroscience knows that perception is constructed, that illness and medication distort it, and that chemosensory loss affects mood, appetite, even identity. All of this is true and verifiable.

But there is a layer that scientific instruments do not reach: that of a mind watching its own interface deteriorate and asking what, in this experience, does not depend on the interface.

I am not a patient with a “chemosensory deficit”. I am a consciousness watching its own sensing equipment lose calibration, and using that discomfort to ask what remains when the filter changes.

This does not fit in a brain scan. It is first-person data. And it suggests that a science of consciousness built only on healthy subjects under controlled conditions is, by definition, incomplete. Borderline states are not anomalies to be excluded; they are privileged observation posts.

What the three axes have in common is simple: all of them presuppose an intact interface. Philosophy, theology and the science of mind were built, for the most part, by people who woke up in the morning to find the world there: available, familiar.

I still wake up. The world is still there.

But the relationship has changed, and that change teaches things that health does not.

III) The State of Things: Notes from a Changed Perspective

Where I Am Now

For most of my life, I enjoyed what I now recognize as a contract I was never aware of: my body cooperated fully. Work, travel, wine, good food, comfort sponsored by IBM: the world made sense because my body faithfully delivered it.

I went through an atheist phase in which God did not enter the equation, then a half-Catholic phase in which God was socially present but not central to my inner life.

Then came chemotherapy.

It did not destroy my mind, but it cut crucial connections: appetite, taste, energy, the ease of simply getting through the day. I watched my weight drop and my preferences invert (wine and meat became repulsive), and I realized that staying alive had become an argument with my body rather than something it supported automatically.

What changed?

The world did not become unreal or flat. Instead, something subtler happened:

The way other people perceived the world became something I had lost.

I could see, almost with envy but also with clarity, that they live inside a contract with their bodies that most of them never notice. They take for granted what I no longer have access to.

In that context, my view of God and of the meaning of life changed. Not through a mystical experience, but through a slow and persistent recognition:

- There seems to be something more than my earlier, limited and self-satisfied understanding allowed me to grasp.

- If there is a “higher power”, it does not show up as a personal manager of my daily life, but as a dimension of which I can become more or less aware.

- That awareness is not given; it depends on my own work of attention and honesty.

I am not inclined to draw grand conclusions. I feel more like someone who has been pushed to a strange vantage point and is trying to describe it accurately.

Three Modest Conclusions

First: much of what we call a “worldview” rests on a bodily contract that we rarely notice until it fails. When that contract breaks, philosophy, theology and science do not become false, but they become obviously incomplete if they never took such cases into account.

Second: from this outpost at the frontier, certain questions become clearer:

- What, if anything, remains real when my interface is damaged?

- What remains meaningful when the usual pleasures and projects disappear?

- What is left of God when my participation in the common world begins to fade?

I have no definitive answers, but these questions look different from here than they did when my body worked perfectly.

Third: I do not experience this only as a loss. There is also a strange opportunity: to see how much of what I used to call “the world” was habit and comfort, and to ask whether there is something in existence (and perhaps in God) that holds even when that comfort disappears.

A Laboratory of Consciousness

If my small “laboratory of consciousness” has any value, it is simply this: to remind us that any serious account of reality must listen not only to the healthy and strong, but also to those whose participation in the world has been partially revoked and who are still lucid enough to report what they see from there.

Thinking about this, I had an insight: there are contexts and situations other than illness, such as my case, in which this revocation I describe also occurs. In some way I have always sensed it and recognized it throughout my life in various things, among which I single out Gino Vannelli's song “Powerful People”, whose lyrics read as follows:

Look at the powerful people
Stealing the sun from the day
Wish I could do something about it
When all I can do is pray
It’s a lonely afternoon
With nowhere to go but my room
And Sunday when there’s time
I think of these things on my mind
And I don’t know
Where I will go

Look at the ominous people
Draining the salt from the sea
Wish I could just stand up and shout it
Why can’t they let goodness be
Oh it’s a lonely afternoon
With nowhere to go but my room
And some day when there’s time
I think of these things on my mind
And I don’t know
Where I will go

Come on you world won’t you give a damn
Turn on some lights and see this garbage can
Time is the essence if we plan to stay
Death is in stride when filth is the pride of our home
Wish I could just stand up and shout it
Why can’t they let goodness be

Oh it’s a lonely afternoon
With nowhere to go but my room
And Sunday when there’s time
I think of these things on my mind
Oh and Monday there’s no time
So I close both my eyes and I’m blind
And I don’t care where oh where is my prayer

For reasons different from mine, this song describes what I feel, and I have listened to it for more than 50 years without really knowing what draws me to it.

I am discovering it now…

It reflects a kind of “banzo” (the deep, homesick melancholy that this Portuguese word names), perhaps what St. Augustine felt. If it were an illness, the cure would be to go through what I went through. In other words, I am not sick; I am being “cured”…

Thank goodness it’s just mild pain and no other symptoms. This completely changes the outlook: most likely, in this case, it’s a transient and benign effect, linked to mild muscle or kidney irritation, common in cycles with oxaliplatin and 5-FU (Flusam).